In this post Jason Chao, PhD candidate at the University of Siegen, introduces Memespector-GUI, a tool for doing research with and about data from computer vision APIs.

In recent years, tech companies have started to offer computer vision capabilities through Application Programming Interfaces (APIs). Big names in the cloud industry have integrated computer vision services into their artificial intelligence (AI) products. These computer vision APIs are designed for software developers to build into their own products and services. Indeed, your images may already have been processed by these APIs without your knowledge, because their operations and outputs are not usually presented directly to end-users.

The open-source Memespector-GUI tool aims to support investigations both with and about computer vision APIs by enabling users to repurpose, incorporate, audit and/or critically examine their outputs in the context of social and cultural research.

What kinds of outputs do these computer vision APIs produce? The specifications and affordances of these APIs vary from platform to platform. As an example, here is a quick walkthrough of some of the features of Google Vision API…

Label, object and text detection

Google Vision API aims to label images, identify the objects in them and recognise any text they contain. “Labels” are descriptions that may apply to the whole image. “Objects” are individual items located within the image. “Text” is any printed or handwritten text recognised in the image.

Image sample 1

Labels:

• Sky

• Building

• Crowd

Objects:

• Building

• Person

• Footwear

Text:

• DON’T SHOOT OUR KIDS

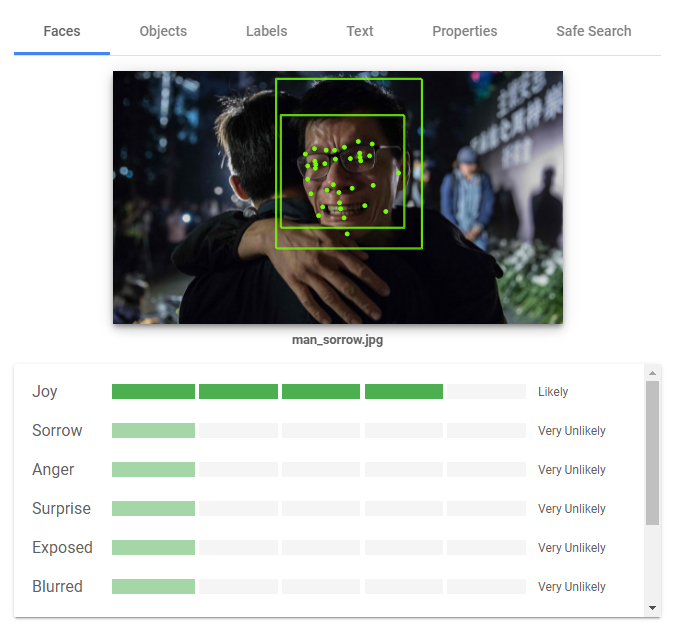
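For readers curious about what sits underneath, here is a minimal sketch of the kind of request body that Google Vision API's REST endpoint (`images:annotate`) accepts for label, object and text detection. It only builds the JSON and sends nothing over the network. The function name `build_annotate_request` and the `max_results` default are illustrative assumptions, and this is not necessarily how Memespector-GUI talks to the API internally.

```python
import base64
import json

# Feature type names used by the Vision API's REST interface for
# label, object and text detection.
FEATURES = ["LABEL_DETECTION", "OBJECT_LOCALIZATION", "TEXT_DETECTION"]


def build_annotate_request(image_bytes, feature_types=FEATURES, max_results=10):
    """Return a JSON-serialisable body for an images:annotate request.

    Hypothetical helper for illustration; it does not call the API.
    """
    return {
        "requests": [
            {
                # The image is sent inline as base64-encoded bytes.
                "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
                # One entry per kind of detection requested.
                "features": [
                    {"type": t, "maxResults": max_results} for t in feature_types
                ],
            }
        ]
    }


# Placeholder bytes stand in for the contents of a real image file.
body = build_annotate_request(b"placeholder bytes, not a real image")
print(json.dumps(body, indent=2))
```

In practice the bytes would come from reading an image file, and the body would be posted to the API together with an API key. A tool such as Memespector-GUI takes care of that step for you.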

Face detection

Google Vision API also detects faces and attempts to recognise their expressions. It currently detects four emotions: joy, sorrow, anger and surprise. The likelihood of each emotion is presented on a five-point scale (very unlikely, unlikely, possible, likely, very likely). The same scale is used to report whether a face is under-exposed, blurred or wearing headwear.

Image sample 2

Face

• Joy: Very likely

• Sorrow: Very unlikely

• Anger: Very unlikely

• Surprise: Very unlikely

• Exposed: Very unlikely

• Blurred: Very unlikely

• Headwear: Very unlikely
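Because the likelihoods are ordered categories rather than numbers, a common first step in analysis is to map them to ranks. The sketch below does this for one face and picks the most likely emotion. The field names (`joyLikelihood` and so on) follow the Vision API's JSON response; the helper names, the rank values and the `minimum` cut-off are assumptions made for illustration.

```python
# Ordinal ranks for the API's likelihood values. The numbers are an
# assumption for analysis only; the API itself returns the labels.
LIKELIHOOD_RANK = {
    "UNKNOWN": 0,
    "VERY_UNLIKELY": 1,
    "UNLIKELY": 2,
    "POSSIBLE": 3,
    "LIKELY": 4,
    "VERY_LIKELY": 5,
}

# Emotion name -> field name in a face annotation.
EMOTION_FIELDS = {
    "joy": "joyLikelihood",
    "sorrow": "sorrowLikelihood",
    "anger": "angerLikelihood",
    "surprise": "surpriseLikelihood",
}


def emotion_scores(face):
    """Map each emotion to its ordinal rank; missing fields count as UNKNOWN."""
    return {
        name: LIKELIHOOD_RANK.get(face.get(field, "UNKNOWN"), 0)
        for name, field in EMOTION_FIELDS.items()
    }


def dominant_emotion(face, minimum=3):
    """Return the highest-ranked emotion, or None if none reaches `minimum`.

    On a tie, the emotion listed first in EMOTION_FIELDS is returned.
    """
    scores = emotion_scores(face)
    name, score = max(scores.items(), key=lambda kv: kv[1])
    return name if score >= minimum else None


# Values mirroring image sample 2 above.
sample_face = {
    "joyLikelihood": "VERY_LIKELY",
    "sorrowLikelihood": "VERY_UNLIKELY",
    "angerLikelihood": "VERY_UNLIKELY",
    "surpriseLikelihood": "VERY_UNLIKELY",
}
print(dominant_emotion(sample_face))  # joy
```

Treating the scale as ordinal keeps comparisons meaningful without implying that, say, "likely" is exactly twice "unlikely".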

Web detection

Google Vision API attempts to provide contextual information about an image. “Web entities” are the names of individuals, events and other topics associated with an image. “Matching images” are the URLs of images that are visually similar to, or that fully or partially match, the analysed image. In particular, the domain names of these URLs may be repurposed to study how an image circulates on the web.

Image sample 3

Web entities:

• Secretary of State for Health and Social Care of the United Kingdom

• Kiss

• Closed-circuit television

• Matt Hancock

• Gina Coladangelo

• Girlfriend

…

Full matching image URL:

• thetimes.co.uk/…

Pages with full matching image:

• dailymail.co.uk/…

• theaustralian.com.au/…

• mirror.co.uk/…

…
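As a rough illustration of repurposing web detection results to study circulation, the sketch below counts how often each domain appears in a list of matching URLs. The URLs are hypothetical placeholders, not real API output, and the helper names are assumptions.

```python
from collections import Counter
from urllib.parse import urlsplit


def domain_of(url):
    """Return the host name of a URL without a leading 'www.'.

    URLs need a scheme (e.g. https://) for the host to be recognised.
    Subdomains are kept; reducing them to a registrable domain would
    need a public suffix list, which is out of scope here.
    """
    host = urlsplit(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def count_domains(urls):
    """Count occurrences of each domain, skipping URLs with no host."""
    return Counter(d for d in map(domain_of, urls) if d)


# Hypothetical URLs standing in for "pages with matching images".
urls = [
    "https://www.example.com/news/story.html",
    "https://example.com/images/photo.jpg",
    "https://news.example.org/article",
]
print(count_domains(urls).most_common())
# [('example.com', 2), ('news.example.org', 1)]
```

Run over a whole image collection, counts like these give a first picture of which sites an image has travelled to.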

Safety detection

Google Vision API can also be used for content moderation. There are five flags for the safety of an image: Adult, Spoof, Medical, Violence and Racy. The likelihood of each flag is presented on a five-point scale (very unlikely, unlikely, possible, likely, very likely).

Image sample 4

Safety:

• Adult: Very likely

• Spoof: Unlikely

• Medical: Unlikely

• Violence: Unlikely

• Racy: Very likely
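One way to work with these flags across many images is to pick a threshold on the likelihood scale and list the categories that meet it. The sketch below is a minimal version of that idea. The category keys follow the Vision API's safe search response; the function name and the default threshold of "LIKELY" are assumptions, and where to draw the line is a research decision rather than something the API prescribes.

```python
# Likelihood values in ascending order.
LIKELIHOOD_ORDER = [
    "UNKNOWN",
    "VERY_UNLIKELY",
    "UNLIKELY",
    "POSSIBLE",
    "LIKELY",
    "VERY_LIKELY",
]


def flagged_categories(safe_search, threshold="LIKELY"):
    """Return, in alphabetical order, the categories at or above `threshold`.

    Values that are not on the likelihood scale are ignored.
    """
    cutoff = LIKELIHOOD_ORDER.index(threshold)
    return sorted(
        category
        for category, value in safe_search.items()
        if value in LIKELIHOOD_ORDER and LIKELIHOOD_ORDER.index(value) >= cutoff
    )


# Values mirroring image sample 4 above.
sample_safety = {
    "adult": "VERY_LIKELY",
    "spoof": "UNLIKELY",
    "medical": "UNLIKELY",
    "violence": "UNLIKELY",
    "racy": "VERY_LIKELY",
}
print(flagged_categories(sample_safety))  # ['adult', 'racy']
```

Lowering the threshold to "POSSIBLE" or "UNLIKELY" widens the net, which can be useful when auditing how the classifier behaves near its decision boundaries.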

Misrecognition, mislabelling, misclassification

As we know from recent research and investigations into AI and algorithms, these machine-learning-based processes of labelling, detection and classification can often go wrong, sometimes with troubling or discriminatory consequences. The following example shows the facial expression of a man in sorrow being misrecognised as “joy”.

How to repurpose computer vision APIs?

The computer vision APIs do not have official user interfaces since they are not intended to interface with humans directly. Google Vision API has an official drag-and-drop demo to demonstrate its detection capability, but the demo’s features are very limited.

Memespector-GUI is a digital methods tool that helps researchers use and repurpose data from computer vision APIs – whether to facilitate analysis of image collections, to understand the circulation and social lives of images online or to compare and critique the operations of computer vision platforms and services. This tool currently enables users to gather image data from Google Vision API, Microsoft Azure Cognitive Services, Clarifai and other services.

Are they free?

Memespector-GUI is free and open-source. The commercial APIs it connects to, however, are not necessarily free of charge. That does not mean you will have to pay: when you open an account with Google Cloud or Microsoft Azure, you will usually receive free credits, which may be enough to process thousands of images.

Resources

The following resources will guide you through opening accounts and getting free credits (if applicable) with commercial vision APIs.

- Signing up for Google Cloud – https://github.com/jason-chao/memespector-gui/blob/master/doc/GetKeyFromGoogleCloud.md

- Signing up for Microsoft Azure – https://github.com/jason-chao/memespector-gui/blob/master/doc/GetKeyFromMicrosoftAzure.md

- Signing up for Clarifai – https://github.com/jason-chao/memespector-gui/blob/master/doc/GetKeyFromClarifai.md

Once you have opened accounts with the commercial APIs, the following guide provides step-by-step instructions on using Memespector-GUI to enrich your image datasets.

- README for Memespector-GUI – https://github.com/jason-chao/memespector-gui

The project was inspired by previous memespector projects from Bernhard Rieder and André Mintz, and developed with ideas, input and testing from Janna Joceli Omena.