The first of a two-part series providing critical considerations for reporting on environmental issues with social media data

By Thais Lobo, Rina Tsubaki, Liliana Bounegru, Jonathan Gray and Gabriele Colombo

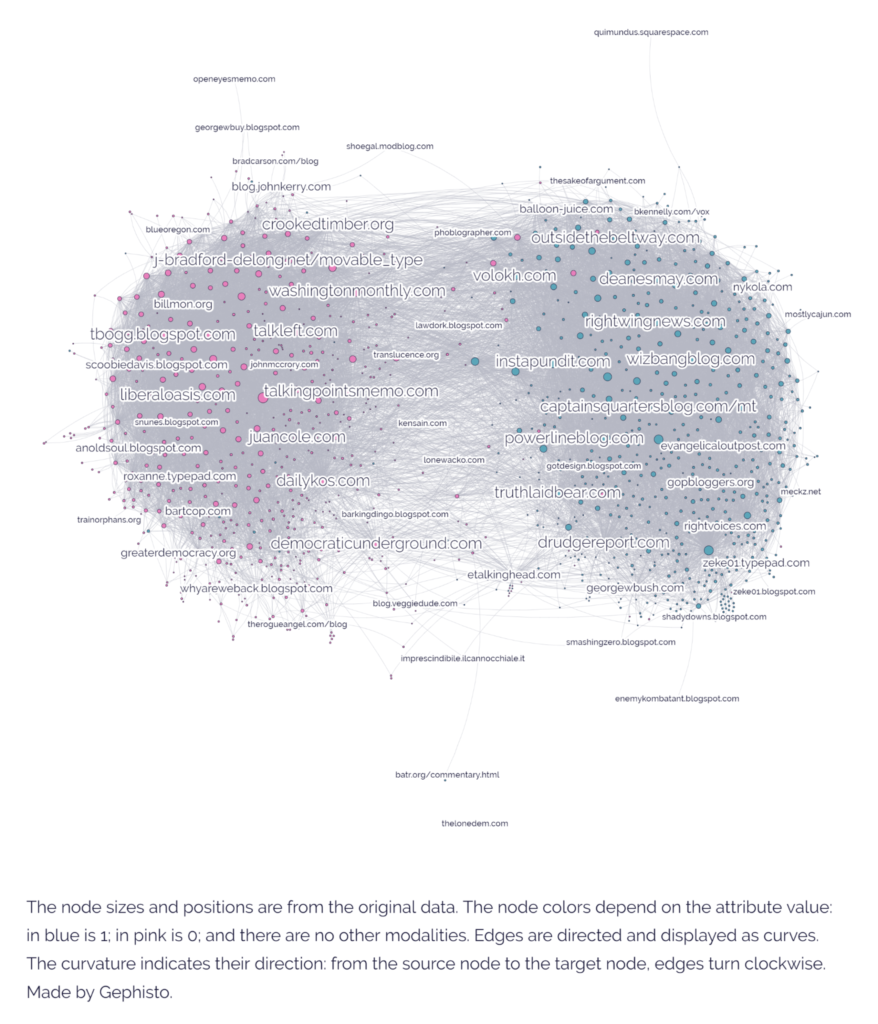

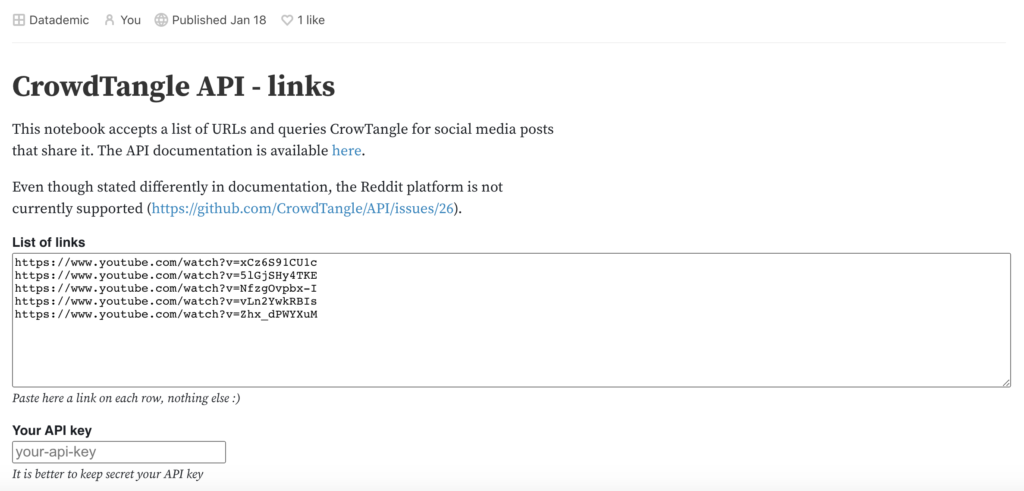

Social media platforms and online services can reveal, in near real time, how forests, rivers, mountains and animals (and human encounters with them) are seen, valued and contested. This isn't without its challenges. Online platforms shape what we see and whose voices are amplified, so they cannot be treated as neutral reflections of how we relate to the environment. But when taken as indications rather than as comprehensive or unbiased sources, they can offer useful leads for climate reporting.

This piece is the first of a two-part series with critical considerations for climate data journalists interested in how online activity about environmental issues can reveal new story angles and generate evidence to support on-the-ground reporting. This first piece focuses on practical tips for searching and analysing digital data, while the second explores how to add depth and context to those online findings.

The checklist series draws on findings from our research on online engagement with forest restoration, carried out as part of the EU-funded SUPERB project. For this research, we looked at online activity across five digital platforms linked to twelve European forest sites where SUPERB is working on ecological restoration. The insights gathered here aim to support climate reporting with and about digital platforms, weighing both the opportunities these sources offer journalists and their limitations.
