Soundscapes as method

How can soundscapes help us attend to forest life and to the many ways that we narrate and relate to forests, forest issues, and efforts to protect and restore forests?

Forests and their wider ecologies are presented not only as sites of conservation and relaxation, but also as crucial infrastructures in addressing and building resilience against the effects of climate change; habitats for endangered species; hotspots of biodiversity; part of poverty alleviation programmes; sites for ecotourism, health and wellbeing; scenes of neocolonial afforestation; backdrops for corporate greenwashing; landscapes of danger, violence, destruction and resource conflicts; and places where different kinds of planetary futures may emerge. Forests are involved in collective life in many ways.

In this context, the forestscapes project will explore, document and demonstrate generative arts-based methods for recomposing collections of sound materials to support “collective inquiry” into forests as living cultural landscapes. It aims to facilitate interdisciplinary exchanges between natural scientists, social scientists, arts and humanities researchers, artists and public-spirited organisations and institutions working on forest issues.

While many previous works have explored sound as a medium for sensory immersion (e.g. field recordings), forestscapes asks how recomposing sound material may be used to explore forests as mediatised and contested cultural landscapes: diverse sites of many different (and marginalised) kinds of beings, relations, histories and representations. As part of the project we will co-create new sound works, as well as generative composition techniques using open source software and hardware.

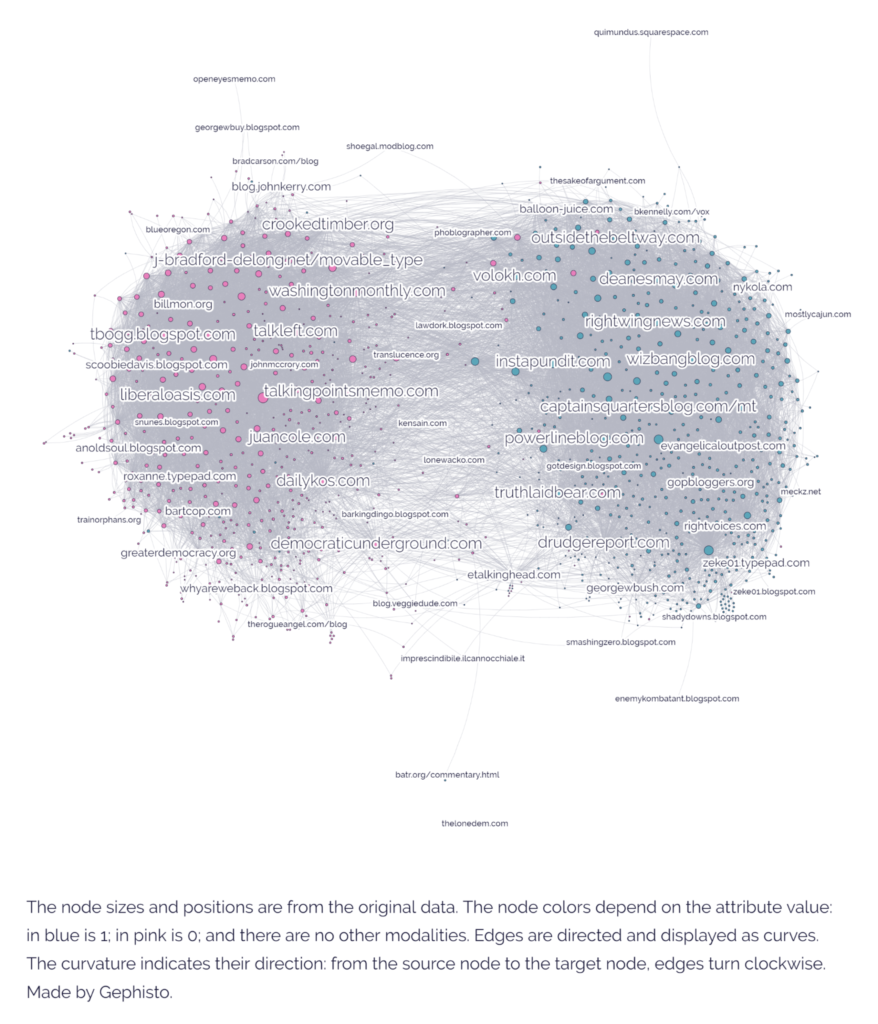

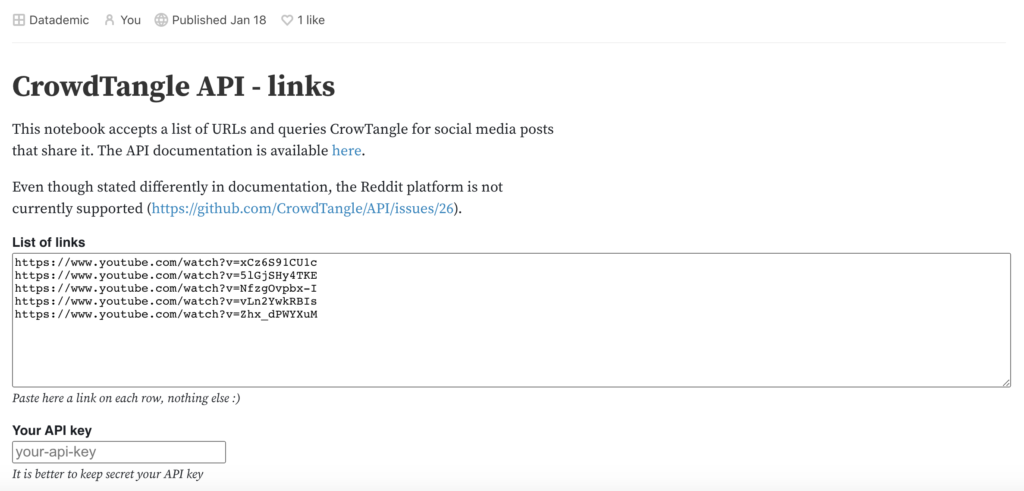

Research on visual methods has explored how to work with “folders of images”, including formats for the re-arrangement of images for collective interpretation. Forestscapes will explore generative methods and techniques for working with “folders of sound” – whether folders of site-based recordings or collections of sounds associated with a particular place gathered from the web and social media.

Further details and materials from the project will be added here.

Call for folders of forest sounds

As part of the project we have an open call for folders of forest sounds. If you have a collection of forest sounds related to a particular site and you’d be interested in exploring soundscaping techniques, we’d love to hear from you.

What? We welcome sounds collected in different contexts, e.g. research projects involving forests in some way (ecological restoration, studies of climate change impacts, fieldwork, etc.); sounds recorded during walks and trips; and material collected online.

How? You can tell us about your folders of forest sounds here.

Who? We’re keen to hear from everyone with collections of forest sounds – whether you’re a forest scientist with bioacoustic recordings; an environmental organisation exploring sound as a medium of community engagement; a new media researcher gathering online materials; an ethnographer working with sound materials; a musician working with field recordings from a particular forest site; an artist interested in generative compositional techniques with ecological sounds; or a walker who has gathered a collection of sounds from a forest you often go to.

When? Please submit your files by 17th March 2023.

The forestscapes project is a collaboration between the Department of Geography, the Department of Digital Humanities, the Centre for Digital Culture, the Centre for Attention Studies, the Digital Futures Institute and the Environmental Humanities Network at King’s College London, together with the Public Data Lab. It is supported by the Natural Environment Research Council.